AI in South African FinTech - Pulse Survey Report

- FINASA


Executive snapshot

For the 2026 FSCA Annual Conference (18–19 March), the Fintech Association of South Africa (FINASA) ran an exclusive member pulse survey on the state of AI in financial services. The survey drew 44 responses, predominantly from C‑suite leaders and founders across payments, lending, banking, insurance, wealth, and regtech.

The results show a sector that is already using AI at scale in core operations, expects AI to be mission‑critical within three years, but is navigating regulatory uncertainty and uneven governance maturity.

Who responded: a senior, systemically relevant sample

This was not a general consumer poll; it was a focused pulse of decision‑makers engaged with South Africa’s regulatory and fintech agenda at the FSCA Annual Conference.

50% of respondents were C‑suite or founders, with a further cohort at Exco‑1, heads of function and senior managers.

Participants span the value chain: payments (13), regtech/compliance (6), wealth and investments (5), lending/credit (3), insurance (3), banking platforms (3), and a diverse “other” segment including digital asset infrastructure, law firms and financial literacy platforms.

Company stages range from pre‑Series A fintechs to listed and large incumbents, with roughly half of responses from early‑stage firms and half from growth/large institutions.

This mix reflects FINASA’s role as the industry body for fintech in South Africa, convening startups, incumbents, and ecosystem partners in one conversation with regulators and policymakers.

Adoption of AI in South African FinTech: AI is no longer experimental

Across respondents, overall AI adoption averages 3.45 out of 5, indicating that AI is already embedded in multiple functions for many firms.

Viewed through a maturity lens, 41% report “a few live use cases in production”, 32% have “multiple scaled use cases across the business”, and 14% say “AI is embedded in most products and processes”.

No respondent reports “no AI activity”; the baseline is at least some experimentation or live use.

The dominant current use cases cluster around risk, customer interaction, and productivity:

Customer support (chat, email, call assist): 24 organisations.

Internal productivity (code, content, analysis): 23 organisations.

Fraud / AML / transaction monitoring: 20 organisations.

Marketing / acquisition / pricing: 17 organisations.

More specialised use cases like credit scoring, collections, trading/treasury, and compliance/surveillance are present but less widespread, pointing to a layered adoption pattern: AI first augments service and internal efficiency, then extends into regulated decision‑making and capital‑intensive domains.

Looking ahead, respondents expect AI to become mission‑critical: the expected importance of AI in three years scores an average of 4.39 out of 5, with a majority rating it at level 4 or 5.

Risk, governance and the “grey area” reality

While adoption is advanced, risk understanding and governance have not fully caught up.

Risk understanding and sentiment

Respondents rate their understanding of AI risks at 3.36 out of 5, signalling a moderate but incomplete grasp of the risk landscape.

The most frequently cited risk areas are data privacy and security (37) and cybersecurity/adversarial attacks (19), followed by third‑party/vendor risk, bias/fairness, and operational resilience.

Concerns about unintended consequences from agentic AI average 3.57 out of 5, with a sizeable cluster at the “very” or “extremely concerned” end of the spectrum.

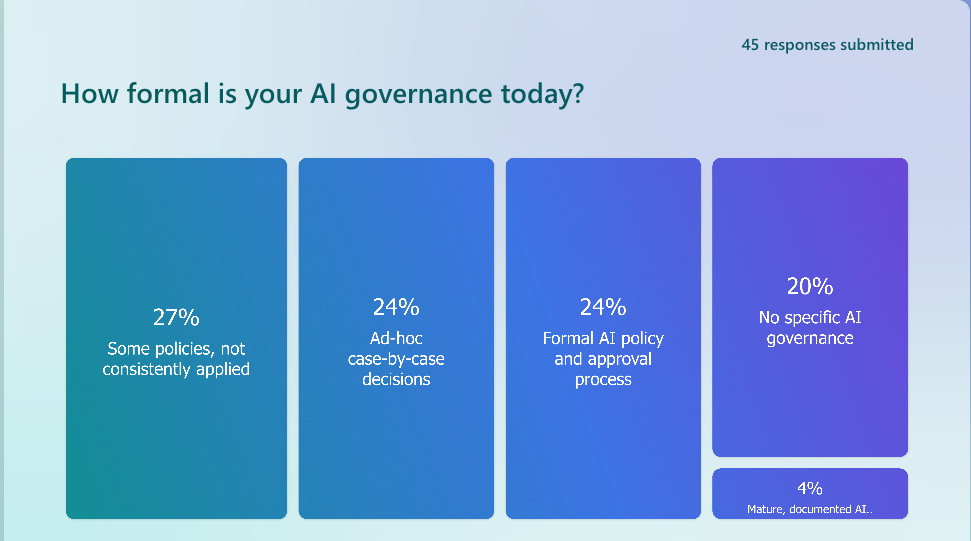

On governance:

Only 2 organisations report “mature, documented AI governance with regular review”.

The majority sit in the middle: “some policies, not consistently applied” (12) and “formal AI policy and approval process” (11).

19 respondents still rely on no specific AI governance or ad‑hoc case‑by‑case decisions.
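For readers who want to compare these counts with the percentage figures used elsewhere in this report, the short Python sketch below converts them into shares of the sample. It assumes each of the 44 respondents chose exactly one governance option (the four counts do sum to 44); the variable and function names are illustrative and not part of the survey.

```python
# Governance counts as reported above (44 responses in total).
# Assumption: each respondent selected exactly one option.
governance_counts = {
    "mature, documented governance with regular review": 2,
    "some policies, not consistently applied": 12,
    "formal AI policy and approval process": 11,
    "no specific governance or ad-hoc decisions": 19,
}

total = sum(governance_counts.values())


def share(count, sample_size):
    """Return count as a percentage of sample_size, rounded to one decimal."""
    return round(100 * count / sample_size, 1)


governance_shares = {
    label: share(count, total) for label, count in governance_counts.items()
}

for label, pct in governance_shares.items():
    print(f"{label}: {pct}%")
```

On these figures, the middle two categories together account for 23 of 44 respondents, which is the slim majority referred to above.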

This creates a recognisable “go‑fast but nervous” posture:

The average score on “How much does your AI use feel like a grey area / Wild West?” is 2.86 out of 5.

Internal accountability if an AI‑driven decision causes harm is only moderately clear, scoring 3.14 out of 5, with a spread from “not clear at all” to “very clear”.

Narrative comments reinforce this tension: respondents talk about using AI as a strategic enabler in controlled pilots, prioritising privacy and fairness, but also highlight governance gaps, skills constraints, and uncertainty about where accountability sits when AI‑influenced decisions cause customer harm or financial loss.

Regulatory clarity: strong demand for guidance, not a brake on innovation

A central theme of this FSCA‑linked pulse is the call for clearer, more structured guidance on AI in financial services.

Current clarity and stance

Respondents score the clarity of current South African regulatory expectations on AI at just 2.16 out of 5, with no one selecting “very clear”.

Views on the current regulatory stance towards innovation are mixed but generally moderate: 36% perceive it as balanced between safety and innovation, 18% as mainly constraining, 11% as too permissive, and 34% are unsure.

The areas where firms most need regulatory clarity are:

Data usage, sharing and privacy for AI: 30 mentions.

Agentic / autonomous AI acting on behalf of staff/customers: 20 mentions.

Use of third‑party / big‑tech AI models: 19 mentions.

Customer communications and chatbots, and model risk management and validation: 15 mentions each.

The priority level for engagement with regulators on AI over the next 12–18 months averages 3.09 out of 5, signalling that firms see regulatory dialogue as important but unevenly prioritised across the ecosystem.

What industry is asking regulators for

When asked how valuable different forms of support would be over the next 12–18 months, respondents lean strongly towards collaborative, principles‑based tools.

The most valued items include:

A regulator‑backed AI sandbox to test innovations safely.

Clear guidance and principles on AI use in financial services.

Model governance and validation templates, plus industry playbooks and case studies on responsible AI.

Standard clauses for AI‑related third‑party contracts, plus regular FINASA–regulator roundtables on AI.

Free‑text comments underline this: several respondents explicitly call for agile, sandbox‑driven frameworks where regulation “follows innovation” while maintaining safe harbour for consumers and the market.

Agentic AI: from concept to controlled production

Agentic or autonomous AI – systems that can act on behalf of staff or customers – is no longer theoretical.

41% of respondents are “exploring conceptually”, 27% are running small experiments, and 23% already have agentic AI live in limited production use.

The main use cases envisioned are customer service workflows (33), operations/back‑office automation (26), and KYC/onboarding/case handling (26), with strong interest in internal employee agents (copilots).

At the same time, concern levels are high and governance is uneven, which is why agentic AI features prominently in the list of topics where regulatory clarity is most needed.

Attitude to AI: cautious optimism, not hype

Overall sentiment towards AI is balanced but forward‑leaning.

Respondents describe their organisations using terms such as:

“Balanced risk and opportunity” and “moderately cautious, selective adoption”.

A growing cluster of “opportunistic and fast‑moving” and “all in on AI” organisations, particularly among fintech and regtech founders.

A smaller group of “highly cautious and slow‑moving” incumbents, often linked to complex legacy systems and stricter internal risk appetites.

Qualitative responses speak to ongoing internal education campaigns, cross‑functional AI conversations, and a desire to leverage AI for productivity and inclusion while avoiding governance failures and customer harm.

What this means for the FSCA, FINASA and the ecosystem

For the FSCA and other regulators:

The sector is already using AI in consequential areas – fraud, underwriting, customer engagement, and operations – not just in low‑stakes experiments.

Firms are not asking for a free‑for‑all; they are asking for practical guidance, shared templates and sandbox environments that allow responsible experimentation with clear guardrails.

Priority topics for joint work include data usage and privacy, third‑party model reliance, agentic AI, model risk management, and board/senior management responsibilities.

For FINASA:

These findings validate FINASA’s focus on regulatory working groups and structured engagement with SARB, FSCA, PASA, NCR and National Treasury on AI‑related topics.

There is a clear mandate for FINASA to convene member‑regulator roundtables, co‑develop principles and templates, and advocate for a regulator‑backed AI sandbox that reflects South Africa’s specific risk profile and inclusion agenda.

As the industry body for fintech in South Africa, FINASA can translate these pulse insights into sustained programmes of work – from responsible AI playbooks and case studies to specialised forums on agentic AI, alternative credit scoring, and AI‑driven customer communications.

For industry leaders:

The survey underscores a first‑mover advantage for firms that match their pace of AI innovation with robust governance and clear accountability.

There is an opportunity to co‑shape the rules of the game: by engaging through FINASA and FSCA processes, firms can help ensure that South Africa’s AI framework protects consumers while enabling innovation and investment.
